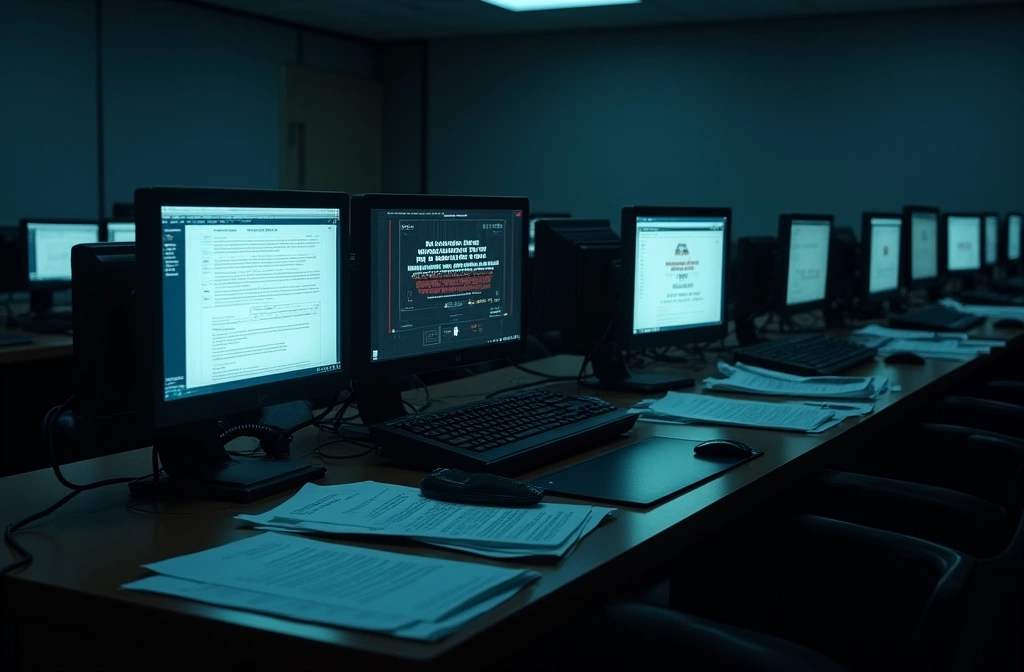

CyberSecurityNews reported that a threat actor leveraged Claude and ChatGPT to breach multiple U.S. government agencies. The incident, first detected April 12, 2026, underscores a critical vulnerability: the intersection of widely accessible AI tools and inadequate endpoint/prompt security protocols.

This matters because government agencies operate under the assumption that classified networks are air-gapped or at minimum isolated from consumer-grade tooling. The breach suggests one of three possibilities: (1) personnel are using public AI to draft or process sensitive information before transferring it to secure systems, (2) AI-generated social engineering or code is bypassing traditional security gates, or (3) some combination of the two.

The risk multiplier is scale and accessibility. Unlike specialized exploit frameworks, Claude and ChatGPT are designed for broad adoption, which makes them low-friction entry points for reconnaissance, credential harvesting, prompt injection, and malware drafting. Attackers face no technical barriers; they operate within the tools' designed parameters.

For preparedness readers: This is not a failure of AI companies, but a governance gap. Organizations handling sensitive information must implement explicit policies around public LLM use.

Immediate steps:

- Audit employee access logs for AI tool usage on networked systems

- Enforce air-gapped workflows for classified or high-value data

- Deploy DLP (Data Loss Prevention) rules blocking copy-paste of sensitive context into public services

- Train staff to recognize prompt injection and social engineering carried out through AI personas
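The audit and DLP steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production control: the log format (`<timestamp> <user> <url>`), the domain watchlist, the two regex patterns, and the function names `audit_proxy_log` and `find_sensitive` are all assumptions made for the example. A real deployment would rely on the organization's own proxy or DLP tooling and a much broader rule set.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Assumed watchlist of public LLM hostnames; adapt to local policy.
AI_TOOL_DOMAINS = {"claude.ai", "chatgpt.com", "chat.openai.com"}

# Assumed, deliberately small DLP patterns for illustration only.
SENSITIVE_PATTERNS = {
    "classification_marking": re.compile(
        r"\b(TOP SECRET|SECRET|CONFIDENTIAL)//", re.IGNORECASE
    ),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def is_ai_tool_host(host: str) -> bool:
    """True if host is a watched domain or a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_TOOL_DOMAINS)


def audit_proxy_log(lines):
    """Count requests to watched AI hosts per user.

    Assumes each line is '<timestamp> <user> <url>' with a full URL
    (scheme included); lines with fewer than three fields are skipped.
    """
    hits = Counter()
    for line in lines:
        parts = line.split()
        if len(parts) < 3:
            continue
        user, url = parts[1], parts[2]
        host = urlsplit(url).hostname or ""
        if is_ai_tool_host(host):
            hits[user] += 1
    return hits


def find_sensitive(text: str):
    """Return the sorted names of DLP patterns that match the text."""
    return sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    )
```

In use, `audit_proxy_log` would be fed lines from an exported proxy log to surface which accounts reach public AI services, and `find_sensitive` would be called on clipboard or form content before it leaves the network. Matching on hostname suffix rather than substring avoids flagging unrelated domains that merely contain a watched name.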

Watch for: Formal government guidance or directives restricting AI tool access on federal networks. Precedent exists (USB bans, cloud restrictions); similar controls may follow.

Severity remains low because neither the breach nor the scope of any data theft has been officially confirmed. Track official CISA or agency statements for confirmation and scope details.